Is My Ed Tech Tool Making a Difference?

An Entrepreneur’s Guide to Using Research to Improve Products and Measure Impact.

By Tonika Cheek Clayton and Cameron White

Every ed tech entrepreneur wants to develop an amazing tool that may one day become a household name. At NewSchools, we invest in ventures that also care deeply about impact. Through our ed tech accelerator NewSchools Ignite, we help entrepreneurs use research to generate data that can inform their product and business strategies.

Based on these experiences, we’ve developed a guide designed for entrepreneurs at any stage of their research journey. This web interface pulls together many of the main points for a quick read. The full guide is also available to download as a PDF.

Our guide examines a range of research types, noting those that are appropriate at various stages of development, on small or large budgets, and within varying timeframes. We believe it will also offer useful information for educators and funders.

Key Research Practices Explored in This Guide

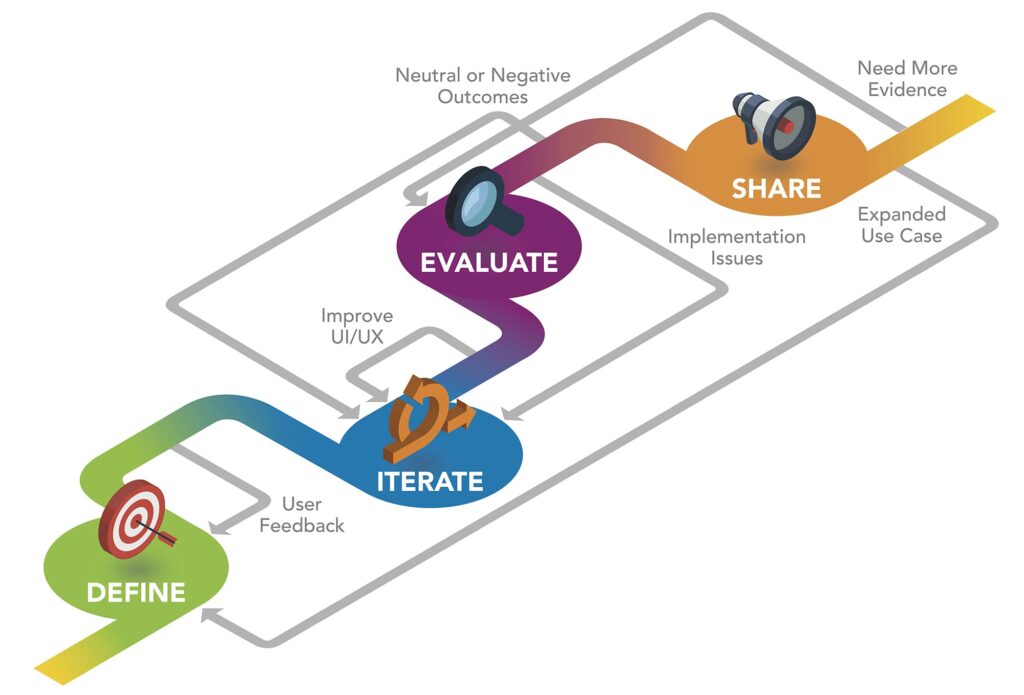

Define the intended impact and create a logic model.

Identify the student outcomes you believe your product supports, document how use of your product supports these outcomes, and gather feedback on your ideas from a diverse group of stakeholders.

Iterate based on feedback from usability and feasibility testing.

Observe how the product is used in a real setting by teachers and students, and refine product features and supports based on what you learn.

Evaluate evidence of student outcomes.

Develop research questions and timelines that align with your product and business roadmaps. Be mindful of key considerations including product stage, costs, and return on investment.

Share what you learn about impact – both the celebratory insights and the tough lessons.

Synthesize different types of evidence to describe how your product can support student outcomes, focusing on key audiences like educators and funders.

Many types of research have value, and the entrepreneur’s research journey is not linear. Research practices don’t always fall perfectly into a sequential order, and each can have value as an independent undertaking.

So, how can an ed tech entrepreneur check in and collect evidence of impact in the midst of an iterative product development cycle? Sequencing and timing are important. Conducting an efficacy study before having confidence about how a product is being used in classrooms is probably not the best plan. However, it’s crucial to keep student outcomes top of mind, even at an early stage.

Through our ed tech accelerator NewSchools Ignite, we invest in tools that support student learning and integrate research services into our investment strategy. We identified two external research partners to support our ventures. WestEd conducted product reviews and small-scale studies, and Empirical Education offered student user demographic reports. We build the cost of their work – an average of approximately $40,000 per investment – into our venture support. The content and lessons learned in this guide emerged in part from this work, as well as from conversations with ed tech researchers, entrepreneurs and funders.

NewSchools Ignite launched six ed tech challenges from 2015 to 2018, focused on products addressing critical student needs in Science Learning, Middle and High School Math, English Language Learning, Special Education, Early Learning, and the Future of Work. Through these challenges we funded 84 small-scale research studies conducted by WestEd, primarily focused on generating formative product feedback.

For ed tech ventures outside our portfolio, we recognize this level of early-stage research may be cost prohibitive. In recent years, several organizations have explored the potential value of “rapid-cycle evaluation,”1 designed to “quickly determine whether an intervention is effective” while also enabling “continuous improvement.” Yet even this type of research requires significant financial and human capital resources, so it’s important to consider its costs and benefits as part of a sustainable research strategy.

Key Research Practices Explored in This Guide

These research practices are explained in greater detail in the sections below.

The first step in the research process is defining the student outcomes you believe your product can support. Next, developers can begin to consider short- and long-term metrics related to these intended outcomes.

Across NewSchools Ignite’s first six challenges, we observed a range of intended impacts including various academic, social-emotional, and career and college-ready indicators.

After defining intended outcomes, ed tech developers can begin to outline how access to and use of the product is connected to these outcomes. By explicitly defining potential use cases, the developer makes clear what is required to access the product, and how it should be used in order to achieve the desired results.

Considerations of access, use and outcomes can then be formally documented through a logic model, which describes a product’s “theory of change” through the lens of potential inputs, activities, outputs, outcomes and impact.

“Logic Models: Ensuring Your Product has Impact” Source: WestEd
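One way to keep a logic model close to the product roadmap is to record its five components in a small data structure. The sketch below is illustrative only: the `LogicModel` class, its `missing_components` helper, and the example entries are hypothetical and do not come from the guide or from WestEd.

```python
from dataclasses import dataclass, field


@dataclass
class LogicModel:
    """Hypothetical container for the five logic-model components."""

    inputs: list[str] = field(default_factory=list)
    activities: list[str] = field(default_factory=list)
    outputs: list[str] = field(default_factory=list)
    outcomes: list[str] = field(default_factory=list)
    impact: list[str] = field(default_factory=list)

    def missing_components(self) -> list[str]:
        """Return the names of components that have no entries yet."""
        return [name for name, items in vars(self).items() if not items]


# Made-up entries for an imaginary practice tool.
model = LogicModel(
    inputs=["Student devices", "Teacher training time"],
    activities=["Students complete three practice sessions per week"],
    outputs=["Number of sessions completed per student"],
    outcomes=["Improved scores on a unit assessment"],
)

print(model.missing_components())  # ['impact']
```

Listing the empty components is a simple prompt for the stakeholder conversations described above: anything still blank is a part of the theory of change that has not yet been articulated.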

Summary of research studies focused on formative feedback

| Study type and cost range* | Sample and setting | Potential research questions | Potential funding sources |
|---|---|---|---|
| Usability ($1,000-20,000): Usability studies test whether the core features of the product are usable by the intended end user. | 1:1 user testing (e.g. students, teachers, and/or parents) in a controlled lab setting | Is the product intuitive and easy to use? Are users able to use the product’s features as intended? | Self-funding; foundations and impact funders |
| Feasibility ($20,000-60,000): Feasibility studies test whether the product can be used at scale by the intended end users in an authentic educational context. | A complete product implementation is tested in authentic learning environments (e.g. classrooms) | How do students/teachers use the product in the classroom? What support materials and guides can be provided to help facilitate use in the classroom? What are the barriers to classroom implementation? | Self-funding; foundations and impact funders |

* – Study costs vary widely and depend on a range of factors including: study length, sample size, types of data collected, measurement tools used, hardware costs, types of analysis performed, staffing needs, travel costs, and researcher salaries.

Usability and feasibility testing can provide valuable information about potential improvements, which can be integrated into a venture’s product roadmap and value proposition.

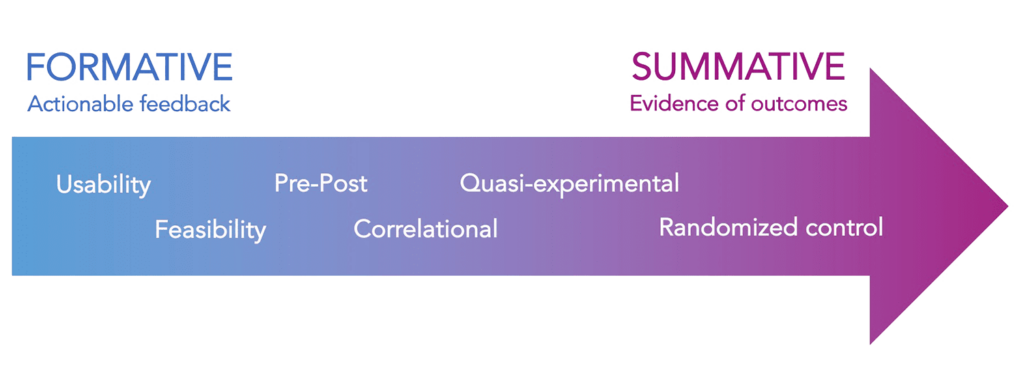

Compared to summative research, which focuses on measuring outcomes, formative research is meant to gather data that can inform product and business strategy, and is relatively inexpensive and low-risk.

Across studies of products funded through NewSchools Ignite, evidence suggests making products easier to use can have a positive impact on student learning.

As the product matures, if it is performing well, there should be positive indicators that suggest it is worth investing more time and money into the product, including more rigorous research. At NewSchools, we define “rigorous evidence” as a randomized controlled trial (RCT) or quasi-experimental design (QED) study, conducted by an external researcher, that demonstrates positive student outcomes. The federal K-12 education law, the Every Student Succeeds Act (ESSA), also stipulates that studies meeting its evidence standards must be “well-designed and well-implemented,” which places additional requirements on the study design.2 That being said, many types of evidence have value.

2 U.S. Department of Education (2016). Using Evidence to Strengthen Education Investments. Retrieved from https://ed.gov/policy/elsec/leg/essa/guidanceuseseinvestment.pdf

Research Continuum

As rigor increases, so generally does the cost of research. As investment in ed tech continues to grow, there will likely be additional resources available for research, but entrepreneurs need to be able to make the case that research is aligned with product and business goals.

Summary of research studies focused on measuring student outcomes

| Study type and cost range* | Prerequisites | Potential returns on investment | Potential funding sources |
|---|---|---|---|
| Pre-post or generic controls ($10,000-50,000): Pre-post studies examine changes in an outcome measured before and after an intervention. Generic controls studies compare performance results (not growth) of a treatment group to nationally or regionally accepted benchmarks or proficiency goals. | Evidence of usability and feasibility | Communicate potential impact to school and district decision-makers; create evidence base that makes future research grant applications (e.g. ED/IES SBIR) more competitive; in some designs, surface feedback that can drive product improvement | Self-funding; foundations and impact funders |
| Correlational (statistical controls) or randomized control (underpowered) ($45,000-250,000): Correlational studies examine whether changes in one variable correspond to changes in a second variable. Statistical controls are methods (e.g. multiple regression analysis, fixed effects, propensity scoring) that compare treatment group performance to that of an “equivalent” population. Randomized control studies randomly assign participants to an intervention or control group, in order to measure effects of the intervention while minimizing bias and other external factors. Underpowered RCTs have a lower probability of detecting an effect on student outcomes. | Preliminary evidence of positive student outcomes; fidelity of product implementation; evidence of usability and feasibility | Communicate potential impact to school and district decision-makers (including those that require alignment with ESSA standards); create evidence base that makes future research grant applications (e.g. IES Goal 3) more competitive; feedback to tweak product/implementation in preparation for more rigorous/expensive studies | Self-funding; foundations and impact funders; federal grants |
| Quasi-experimental (well-matched comparison groups) ($27,000-800,000): Quasi-experimental studies compare outcomes for intervention participants with outcomes for a comparison group chosen through methods other than randomization. In a strong QED, the comparison group will be close to a mirror image of the treatment group. | Preliminary evidence of positive student outcomes; fidelity of product implementation; evidence of usability and feasibility | Possible inclusion in What Works Clearinghouse “with reservations”; create evidence base that makes future research grant applications (e.g. IES Goal 3) more competitive; feedback to tweak product/implementation in preparation for more rigorous/expensive studies; in some designs, possible to compare performance among demographic subgroups | Self-funding; foundations and impact funders; federal grants |
| Randomized control (fully powered) ($3,000,000+): Randomized control studies randomly assign participants to an intervention or control group, in order to measure effects of the intervention while minimizing bias and other external factors. Fully powered, well-designed and well-implemented RCTs provide the highest degree of confidence that an observed effect was caused by the intervention. | Evidence of usability and feasibility | Differentiation: a very small percentage of products offer evidence that meets this standard; possible inclusion in What Works Clearinghouse “without reservations”; “gold standard” of education research | Federal grants |
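The difference between an underpowered and a fully powered RCT comes down largely to sample size relative to the effect a study hopes to detect. The following is a rough sketch, not a substitute for a researcher’s power analysis: it uses the standard normal-approximation formula for comparing two group means, and it assumes individual students are randomly assigned to equal-sized groups. Studies that assign whole classrooms or schools generally need larger samples than this estimate. The function name `students_per_group` and its default values are our own illustrative choices.

```python
import math
from statistics import NormalDist


def students_per_group(effect_size: float, alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate students needed per group to detect a standardized effect.

    Uses the normal approximation n = 2 * ((z_alpha + z_power) / d) ** 2 for a
    two-sided comparison of two group means with equal group sizes.
    Assumption: students (not classrooms or schools) are individually randomized.
    """
    if effect_size <= 0:
        raise ValueError("effect_size must be positive")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for a two-sided test
    z_power = NormalDist().inv_cdf(power)          # quantile for the desired power
    return math.ceil(2 * ((z_alpha + z_power) / effect_size) ** 2)


# Smaller expected effects require many more students per group.
for d in (0.5, 0.2):
    print(f"effect size {d}: about {students_per_group(d)} students per group")
```

Because required sample size grows with the inverse square of the effect size, halving the expected effect roughly quadruples the number of students needed, which is one reason costs climb so steeply toward the fully powered end of the table.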

Potential outcome statements and descriptions by study type

| Study type | Potential outcome statements* | Potential description of sampling methods and data collection techniques |
|---|---|---|
| Usability or Feasibility | “[Venture] worked with [researcher] to understand user perspectives and integrated [user] feedback to improve product usability and/or feasibility.” | “[Users] were recruited via [venture and/or researcher] contacts.” |
| Pre-post | “The study showed statistically significant gains in students’ content knowledge as well as [other outcomes], setting the stage for future explorations of product efficacy.” | “During the intervention, two teachers implemented [product]. Data were collected through a pre-post student content quiz and [other data sources].” |
| Correlational | “The correlation of product usage and student performance on [measure] shows promise that the product has a potential impact on performance.” | “The study makes use of student level data collected from [school district] matched to student usage data from [product].” |
| Quasi-experimental | “The number of students performing near or above standard was higher in schools actively using [product] compared to non-[product] using schools.” | “Achievement on [standardized assessment] in schools using [product] was compared to matched comparison schools not using [product].” |
| Randomized control | “Students who used [product] at recommended dosage saw additional growth of [X]% compared to the control group, with an effect size of [Y].” | “A true, group-randomized, experimental design was used to control for most threats to internal validity. [Users] were randomly assigned into treatment and control conditions.” |
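The “effect size of [Y]” in the randomized control statement above is often reported as a standardized mean difference. As a hedged illustration, the sketch below computes one common version, Cohen’s d with a pooled standard deviation. The guide does not specify which effect size measure a study should use, and the scores shown are made-up numbers for demonstration, not results from any study.

```python
import math
from statistics import mean, variance


def cohens_d(treatment: list[float], control: list[float]) -> float:
    """Standardized mean difference between two groups (Cohen's d, pooled SD)."""
    n_t, n_c = len(treatment), len(control)
    if n_t < 2 or n_c < 2:
        raise ValueError("each group needs at least two scores")
    # Pool the sample variances, weighting each by its degrees of freedom.
    pooled_var = ((n_t - 1) * variance(treatment) + (n_c - 1) * variance(control)) / (n_t + n_c - 2)
    return (mean(treatment) - mean(control)) / math.sqrt(pooled_var)


# Illustrative, made-up post-test scores.
treatment_scores = [78, 85, 90, 72, 88]
control_scores = [70, 75, 80, 68, 77]

print(f"effect size: {cohens_d(treatment_scores, control_scores):.2f}")
```

A real study would report the effect size alongside its sample size, confidence interval, and the assessment used, so that readers can judge how much weight the number can bear.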

Before sharing your impact story, always start by determining the objective and the audience. The distribution channels must also be tailored to match the audience. Regardless of where the company fits on this spectrum, here are some guidelines for communicating:

- Avoid embellishment and be sure any statements or claims about the product are accurate and can be substantiated.

- Make optimal use of the channels the company can control, such as Twitter, Facebook, LinkedIn, the company website and blogs. Publish issue briefs or white papers with tight overviews. Use analytics to track reach and ROI.

- Use language that is accessible and friendly. Avoid excessive use of jargon, acronyms and complex language. Focus on top-line findings and keep it simple. Research need not be abstruse.

- Remember the power of first-person testimonials and storytelling to bring the research story to life.

- Share research findings at conferences about education research, ed tech or PreK-12 education – being sure to tailor the message for different audiences.

- Use third-party validators such as other researchers and thought leaders in education to amplify the message.

Conclusion

A Call to Action for the Broader Education Community

Our investments to support research have surely created value for our ventures and their products’ users. We hope this guide – which distills much of what we’ve learned about ed tech research – extends our collective knowledge to entrepreneurs beyond our portfolio, as well as to educators, researchers and funders who are investing resources into this important work.

- Entrepreneurs must make it a priority to collect evidence. Remember that regardless of your budget or the product’s maturity, many types of research can be valuable – and not just to you, so share what you are learning.

- Educators need to think of themselves as partners, not just users or customers. They must be clear about what they and their students need, and consider providing critical feedback to help entrepreneurs refine their products.

- Researchers should put themselves in the seat of ed tech developers and educators. The product development cycle is dynamic and fast paced, and classrooms are full of students who are literally changing and growing every day. Ed tech entrepreneurs need information that is timely and actionable.

- Funders need to fund ed tech research, and build it into their overall investment. Early-stage ed tech ventures have the double challenge of needing to show impact while having limited resources and capacity available to measure it.